Energy-aware Computing and Numerical Linear Algebra

Many problems in scientific computing and mathematical modeling can be reduced to solving large systems of linear equations. Since such systems might be very large (having millions of unknowns), they are often solved on supercomputers. Current supercomputing consist of several hundred thousand of processors, and thus their energy consumption skyrocketed. Therefore, it is a very important problem to optimize energy consumption of scientific computations.

Changing Computing Paradigms Towards Power Efficiency

TOP500 List is a biannually updated list of world's fastest supercomputers, which are measured by the number of FLOPS (floating-point operations per second) in solving a dense system of linear equations using Gaussian elimination. Green500 List tries to solve the problem of energy efficiency by solving the same problem but using a different metric of FLOPS/W.

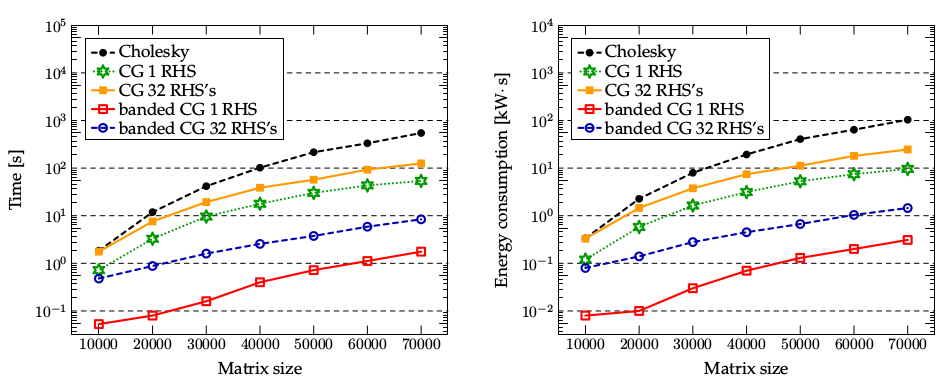

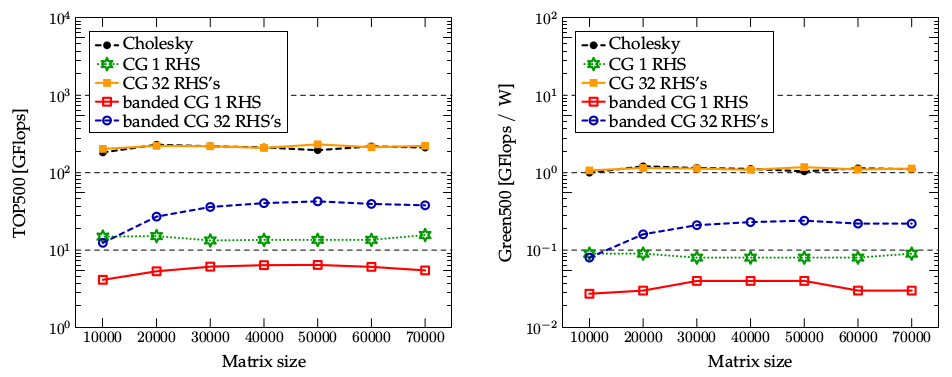

The issue is that both metrics promote power-hungry algorithms instead of other more energy-efficient techniques. By using metrics based on both time-to-solution and energy, it is possible to get a much more realistic picture, which can drive and help the design of future energy-efficient machines and algorithms. In [1], we compare these metrics on an example of solving dense positive definite linear systems using different methods (Cholesky decomposition, CG, specialized iterative method). Our results show clearly that standard metrics are not able to capture the real behaviour of applications, and this is mainly due to the fact that they rank methods and machines on the basis of FLOPS and FLOPS/W rather than real quantities of practical interest, i.e. time-to-solution and energy:

Comparison of different solvers with respect to time-to-solution and energy consumption.

Comparison of different solvers with respect to time-to-solution and energy consumption.

Comparison of different solvers with respect to the standard metrics Flops and Flops/W.

Comparison of different solvers with respect to the standard metrics Flops and Flops/W.

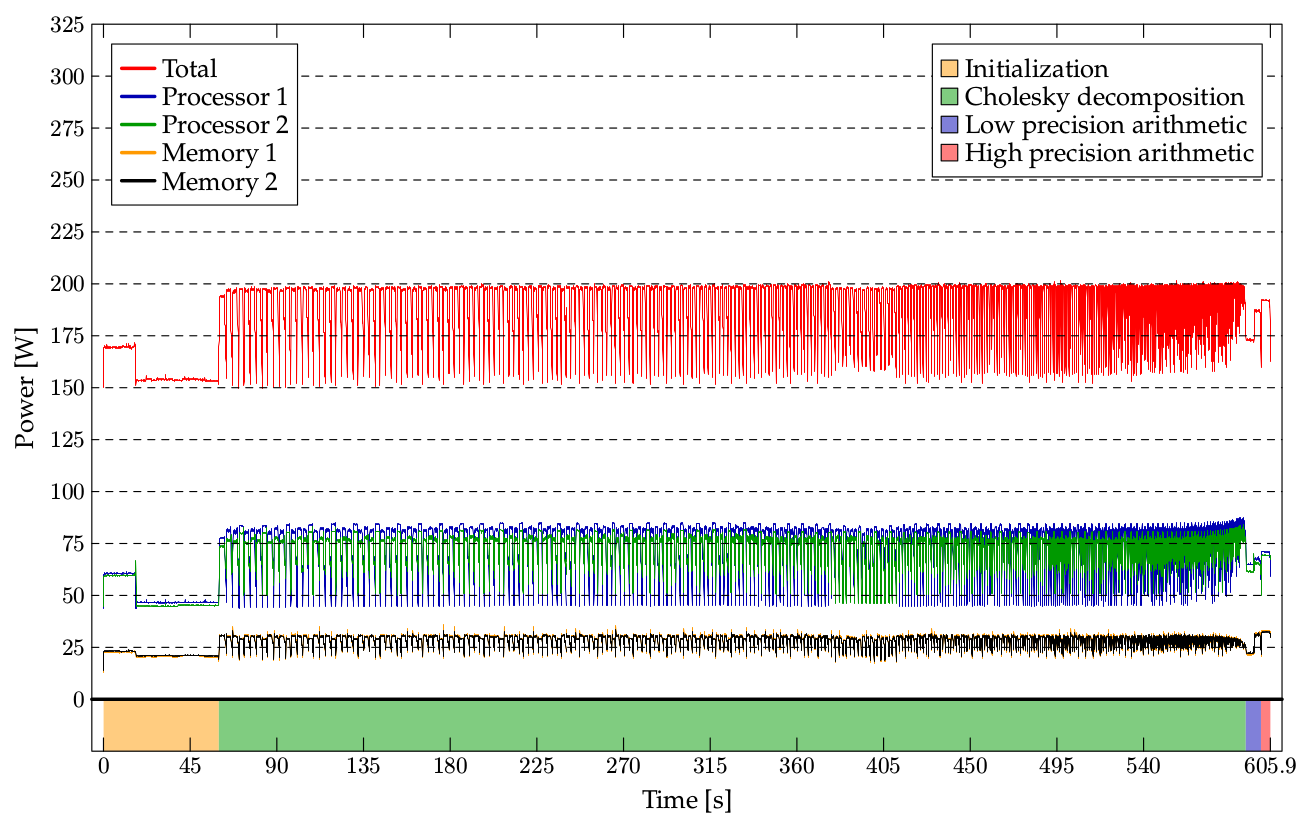

To obtain these data, we develop a fully automated, online, minimally invasive system for measuring power performance on IBM POWER7. Each POWER7 chip has several embedded thermal sensors that measure the temperature of the different components. Data from sensors are transferred to a separate service processor that is embedded in the POWER7 server. We get detailed power measurements as shown below:

Power consumption of solving a positive definite linear system of size 70000x70000 using Cholesky

decomposition on IBM POWER7.

Power consumption of solving a positive definite linear system of size 70000x70000 using Cholesky

decomposition on IBM POWER7.Approximative Iterative Refinement

Wilkinson's iterative refinement (IR) allows to solve a linear system in high precision while performing most of computational operations in low precision. Let x = 0 be the initial solution and r = b. It works in three steps which are repeated till r is small enough:

- Find an approximative solution to Az=r.

- Update the solution x := x+z.

- Recompute the residue r := b - Ax.

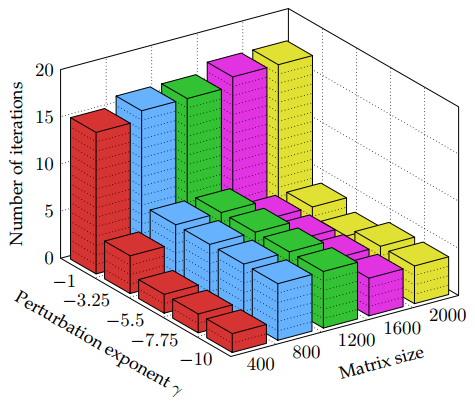

It was pointed out by Bekas et al. that a relatively low accuracy of z in step 1 leads to a similar number of iterations as high accuracy:

The number of iterations to solution of a perturbed Cholesky algorithm, for a range of matrix sizes

and perturbation exponents.

The number of iterations to solution of a perturbed Cholesky algorithm, for a range of matrix sizes

and perturbation exponents.In [1], we introduce something called an approximative iterative refinement (AIR). It is a modification of IR, solving in step 1 a linear system A'z=r where A' is some approximative matrix. In the standard IR, we may view A' as the low precision approximation of A. Different class of solvers can be obtained when A' contains the key structural elements of A. In [1], we solved model dense linear systems with decaying structure (with largest values around the diagonal). Then a good approximation A' is the choice of a banded submatrix of A, which leads to finding the solution extremely fast.

Also, we have found the surprising connection to the splitting methods. When A = S-T, then Ax=b leads to Sx=Tx+b, or x = S-1Tx+S-1b. The splitting methods iterate the last equation as xn+1 = S-1Txn+S-1b. The surprising connection is that the splitting methods are precisely AIR with A'=S. This allows to give a clear explanation of the splitting methods, which I was using for several years in my advance linear algebra classes.

Selected publications

- Pavel Klavík, A. Cristiano I. Malossi, Constantin Bekas, and Alessandro Curioni: Changing Computing Paradigms Towards Power Efficiency. Philosophical Transactions of the Royal Society A: Mathematical, Physical and Engineering Sciences 372(2018), 2014. Links: arXiv, journal link.